12 AI Character Generators That Keep the Same Face Every Time

The single hardest problem in AI image generation is character consistency. Generate a character once, and the model produces a beautiful image. Generate them ten more times, and the face drifts. By shot fifty, you have ten different people who all almost look like your character.

For creators producing serial content, this is the workflow killer. The tools that have solved it are the ones that have moved up the food chain in the past year.

Here are the twelve AI character generators that actually hold the same face across many shots, organized by what each one does best.

1. QWEN Image 2 Pro

QWEN has become the standout for character locking and reference-based generation. Commit a character image once, and subsequent generations hold the face within tolerance across dozens of shots. The pose can change. The environment can change. The clothing can change. The face stays.

For creator content with a recurring character across many images, QWEN is the leading option.

Best for: serial content with one or two recurring characters.

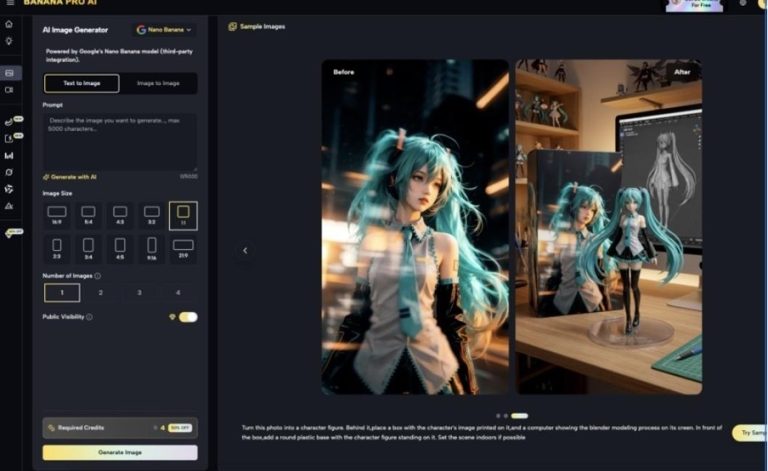

2. Nano Banana 2 with character preserve

Nano Banana 2’s character preserve mode is a close second to QWEN. The output quality sits in Midjourney’s tier, the editing tools are best in market, and once you commit a character image, the face holds across subsequent generations.

The advantage over QWEN is the editing toolkit. Inpainting, regional prompts, and outpainting all integrate with the character preservation, which is useful for fix-up passes on shots where one element didn’t land.

Best for: creators who do meaningful per-shot editing, not just generation.

3. Flux 2 with reference images

Flux 2’s character consistency is meaningfully better than Midjourney’s, and the reference image workflow lets you maintain a character across generations more reliably than pure prompt-based work allows.

It’s not as tight as QWEN’s lock, but for creators already invested in Flux 2 for its prompt adherence, the character workflow is good enough for most serial work.

Best for: Flux 2 users who want character continuity without switching models.

4. Stable Diffusion with LoRA training

Self-hosted Stable Diffusion paired with a custom-trained LoRA produces character consistency that rivals or exceeds the closed models. The investment is real (you train a small adapter, the LoRA, on images of your character), but the payoff is total control and no per-image fees.

For creators committed to a long-running character across thousands of shots, the LoRA workflow is the most powerful option available, and a properly tuned AI character generator workflow built around it can match the output of the commercial alternatives.

Best for: very high volume work, technical creators, privacy-sensitive workflows.

5. Midjourney with character reference

Midjourney’s character reference feature is real and works to a degree, but consistency is looser than the dedicated tools. The face holds within a session but drifts across sessions and across major prompt variations.

For creators committed to Midjourney’s aesthetic baseline, the character reference is useful for sequences within a single project but is not a true character lock.

Best for: Midjourney users producing limited-scope sequences.

6. Leonardo.ai custom models

Leonardo’s custom model training is built around character consistency. Train a model on your character (8-15 reference images), and that character becomes the default subject for any generation in that model. The face is exceptionally stable.

The training step is real overhead, but for brands or creators producing content around one recurring face, the long-term consistency is worth it.

Best for: brands and creators with one recurring character across hundreds or thousands of images.

7. Recraft V3

Recraft has gotten quietly good at character consistency, particularly for stylized aesthetics (illustrated, vector, or graphic styles rather than photorealism). For creators producing stylized character content, Recraft holds the character better than the photorealism-focused tools.

Best for: stylized illustration, vector art, character work that isn’t photorealistic.

8. Higgsfield character workflow

Higgsfield’s strength is cinematic atmosphere. Its character workflow inherits that strength, producing characters that feel like they belong in movie frames rather than AI-generated portraits. Consistency is good, especially within a project.

Best for: cinematic content, characters meant to look like movie stills.

9. Pika character lock for video

For video specifically, Pika’s character lock works on the same principle as the image-side tools but adapted for moving footage. Generate a character image first, lock it, then generate video shots that hold the character.

The video-specific application is meaningful because most image-side character locks don’t transfer cleanly into video without an additional step.

Best for: short-form video creators with a recurring character.

10. Wan with reference frames

Wan’s strength is talking-head and dialogue video, and its character handling is built for that use case. Provide reference frames of your character, and Wan holds the face across dialogue shots with strong lip sync and facial expression coherence.

For talking-head AI video with a recurring character, Wan combines character lock with the dialogue handling that makes the format work.

Best for: talking-head content with a recurring character speaking to camera.

11. Specialized AI influencer platforms

A category of dedicated tools has emerged for AI influencer content specifically. These platforms are built around one or two characters, with workflows optimized for serial social media content. They handle character consistency, posing, environment switching, and posting workflows in one place.

The output quality is usually mid-tier compared to the general-purpose tools, but the workflow specificity makes them faster for the narrow use case they serve.

Best for: AI influencer creators producing high-volume social content.

12. All-in-one creator studios with character lock

The all-in-one studios bundle 30+ image and video models under one workflow with character lock that works across the models. The character generated for an image carries into video, into companion shots, and across modalities.

For creators whose work crosses multiple shot types and multiple modalities, this is the structurally interesting category: it offers character continuity across image and video without copying assets between tools.

Best for: creators producing serial content with character continuity across image, video, and voice work.

How to actually pick

A simple framework that works:

- Character across many image shots, photorealistic: QWEN or Nano Banana 2

- Character across image and video, multi-modal work: all-in-one studio

- Stylized illustration character: Recraft V3 or Leonardo

- Talking-head video character: Wan

- Long-running character across thousands of shots: Stable Diffusion with LoRA training

- Brand character across many marketing pieces: Leonardo custom model

The category leaders for one slot are not the leaders for another. Match the tool to the kind of character work you actually do, not to the generic best-of list. The creators producing the most consistent serial character content in 2026 are the ones who have made this mapping carefully.